Master of Engineering, Robotics

University of Maryland, College Park, MD

CGPA: 3.84 / 4.0

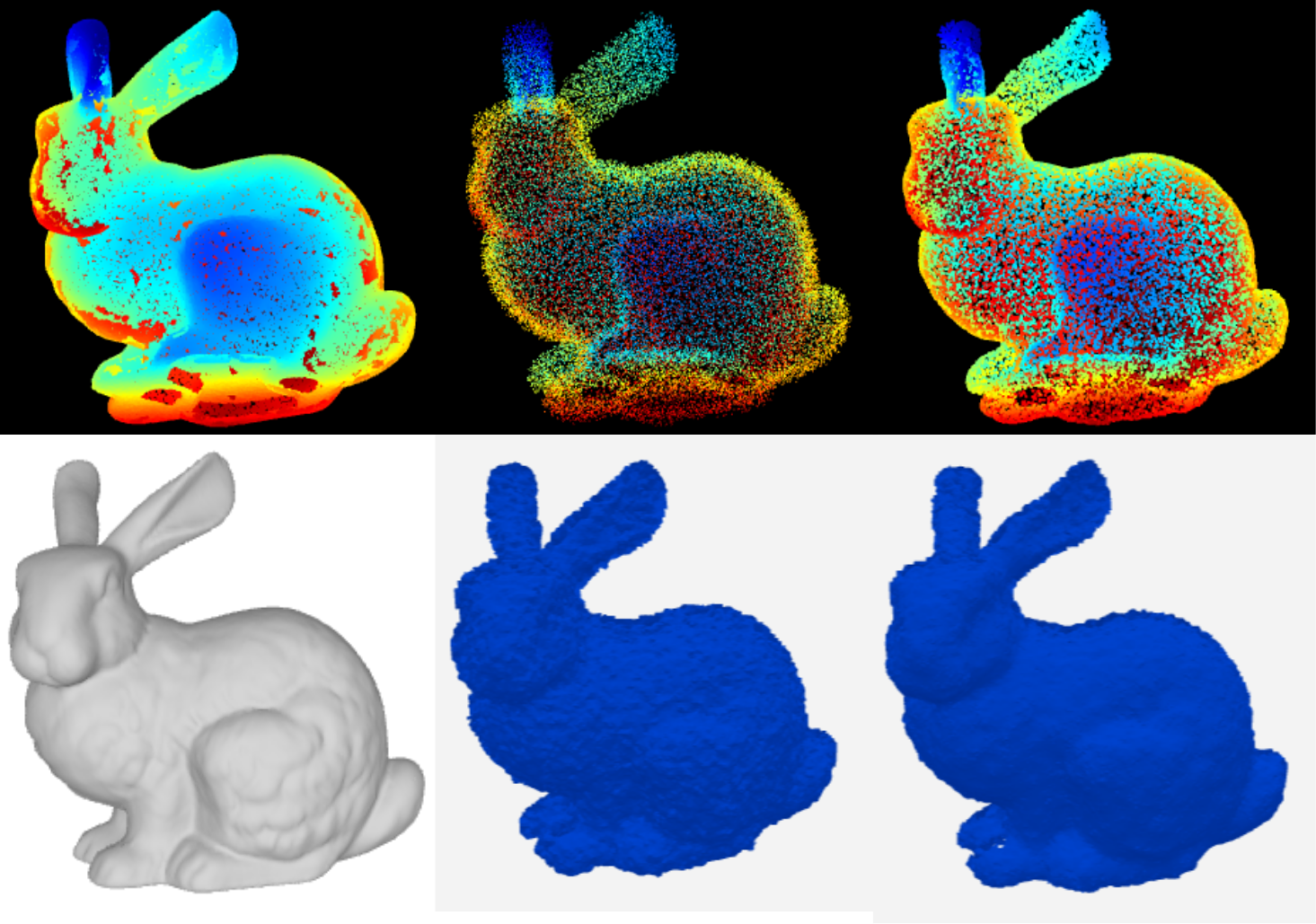

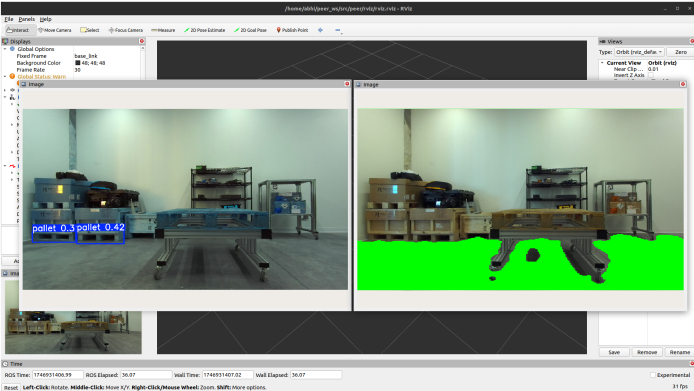

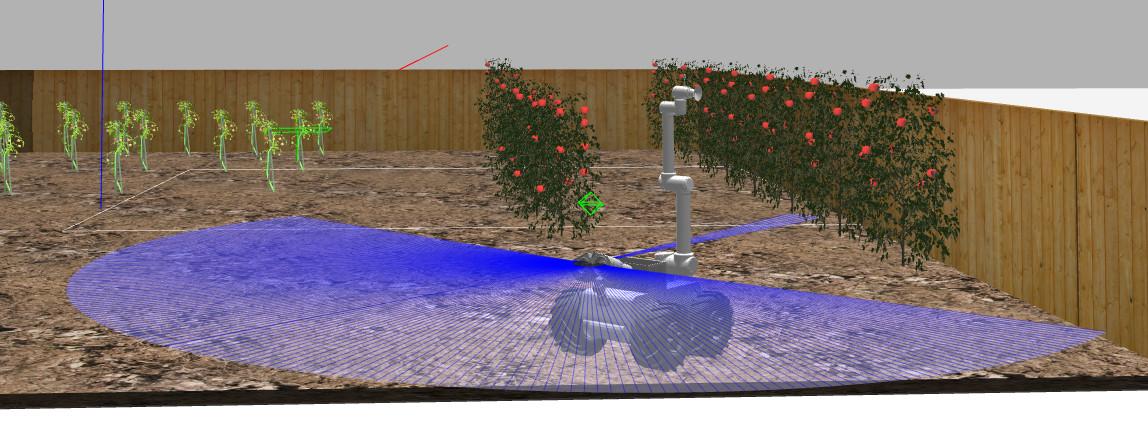

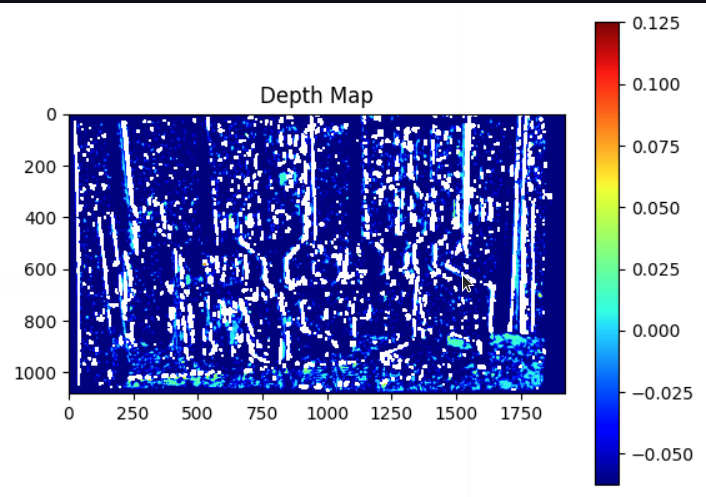

My name is Abhinav Bhamidipati. At Inception Robotics, I contribute to developing autonomy stacks and enhancing robot capabilities through visual odometry and sensor fusion. My passion lies in Computer Vision and Machine Learning, and I have experience in Perception, Path Planning, SLAM, and Reinforcement Learning.

Beyond engineering, I have a creative side and enjoy sketching and painting. I'm driven by innovation, eager to collaborate, and excited to make meaningful contributions to robotics and beyond.

University of Mumbai, Atharva College of Engineering

CGPA: 8.97 / 10.0